The rapid rise in the number of sensors being integrated into the current generation of embedded designs, together with the integration of low-cost cameras and displays, has opened the door to a wide range of new intelligence and vision applications. This embedded vision revolution has forced designers to carefully re-evaluate their processing needs.

Data-rich video applications are driving designers to reconsider their decision to use a particular applications processor (AP), ASIC, or ASSP. In some cases, large software investments in existing APs, ASICs, or ASSPs and the high startup costs for new devices prohibit replacement. In this situation, designers are looking for co-processing solutions that can provide the added horsepower required for these new, data-rich applications without violating stringent system cost and power limits.

Additionally, the widespread adoption of low-cost, mobile-influenced MIPI peripherals has created new connectivity challenges. Designers want to take advantage of the economies of scale the latest generation of MIPI cameras and displays offer. But they also want to preserve their existing investment in legacy devices. In this rapidly evolving landscape, how can designers address the growing interface mismatch between sensors, embedded displays, and APs?

Designers need highly flexible solutions that offer the logic resources of a high-performance, “best-in-class” co-processor capable of the highly parallel computation required in vision and intelligence applications, along with extensive connectivity and support for a wide range of I/O standards and protocols. Ideally, the solution should also offer a highly scalable architecture and support mainstream, low-cost external DDR DRAM at high data rates. Finally, the device should be optimized for both low-power and low-cost operation and be available in industry-leading, highly compact packages.

FPGAs are viable solutions to these embedded co-processing and connectivity challenges. The following application examples at the edge, drawn from the industrial, consumer, automotive, and machine learning areas, show how FPGAs address the challenges described above.

Vision Processing in Industrial Applications

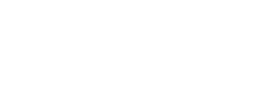

In the industrial arena, FPGA-based co-processing can play an important role by reducing the computational load on the AP, ASIC, or ASSP in video camera, surveillance, and machine vision applications. Figure 1 depicts a typical industrial video camera application. Here, the FPGA sits between an image sensor and an Ethernet PHY. The image sensor streams image data to the FPGA, which then performs image processing functions or image compression using H.264 encoding. The FPGA’s on-chip Embedded Block RAM (EBR) and DSP blocks enable high-performance Wide Dynamic Range (WDR) and image signal processing (ISP) capabilities. Finally, the FPGA sends the image out to the Ethernet network.
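
To make the roles of the EBR and DSP blocks more concrete, here is a minimal Python sketch of a streaming 3x3 filter, the kind of neighborhood operation ISP stages such as noise filtering or sharpening are built from. It assumes the image arrives one row at a time and keeps only the three most recent rows (in hardware, the two previous rows would sit in on-chip line buffers such as EBR), and it normalizes with a shift instead of a divide, as fixed-point hardware typically would. The function name, kernel, and shift value are illustrative assumptions, not taken from any vendor IP.

```python
from collections import deque


def stream_filter_3x3(rows, kernel, shift):
    """Apply a 3x3 integer kernel to an image that arrives one row at a time.

    Only the three most recent rows are held at any moment (in hardware, the
    two previous rows would live in on-chip line buffers). Border pixels are
    skipped for brevity, so the output is two pixels narrower and two rows
    shorter than the input. The sum is divided by 2**shift, a fixed-point
    normalization that avoids a divider.
    """
    window = deque(maxlen=3)  # the oldest row drops out automatically
    output = []
    for row in rows:
        window.append(row)
        if len(window) < 3:
            continue  # not enough rows yet to produce an output row
        out_row = []
        for x in range(1, len(row) - 1):
            acc = 0  # multiply-accumulate, the operation a DSP block performs
            for ky in range(3):
                for kx in range(3):
                    acc += kernel[ky][kx] * window[ky][x + kx - 1]
            out_row.append(acc >> shift)
        output.append(out_row)
    return output


# Hypothetical 3x3 binomial (smoothing) kernel; its weights sum to 16 = 2**4.
BLUR = [[1, 2, 1],
        [2, 4, 2],
        [1, 2, 1]]

ramp = [[0, 1, 2, 3, 4] for _ in range(4)]  # horizontal gradient, 4 rows
print(stream_filter_3x3(ramp, BLUR, shift=4))  # prints [[1, 2, 3], [1, 2, 3]]
```

A symmetric smoothing kernel leaves a linear ramp unchanged, which makes the output easy to check by hand. Note that no full frame is ever stored, which is why this style of processing maps well onto modest amounts of on-chip RAM.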

In addition to image processing and compression, the FPGA can also perform video-bridging functions if the type or number of available interfaces on the AP does not match the needs of the image sensor or sensors.
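
Deciding whether a bridge is needed often starts with simple bandwidth arithmetic: does the sensor's raw pixel rate fit the lanes the AP's receiver provides? The Python sketch below estimates this. The per-lane rate of 1.5 Gbps and the 20% allowance for blanking and packet framing are placeholder assumptions chosen for illustration; the real figures come from the datasheets of the specific sensor, PHY, and AP.

```python
import math


def lanes_needed(width, height, fps, bits_per_pixel, lane_gbps, overhead=1.2):
    """Estimate how many serial lanes a raw video stream needs.

    `overhead` is an assumed allowance for blanking and packet framing
    (20% by default); it is a placeholder, not a figure from any specification.
    Returns the required link rate in Gbps and the lane count.
    """
    payload_gbps = width * height * fps * bits_per_pixel / 1e9
    required_gbps = payload_gbps * overhead
    return required_gbps, math.ceil(required_gbps / lane_gbps)


# Assumed per-lane rate of 1.5 Gbps; check the datasheets of the actual parts.
for name, mode in {"1080p60 RAW10": (1920, 1080, 60, 10),
                   "4K30 RAW12": (3840, 2160, 30, 12)}.items():
    gbps, lanes = lanes_needed(*mode, lane_gbps=1.5)
    print(f"{name}: {gbps:.2f} Gbps -> {lanes} lane(s)")
```

Under these assumptions, the 1080p60 stream fits in one lane while the 4K30 stream needs three. If the sensor needs more lanes than the AP's receiver offers, or uses a different interface altogether, that mismatch is where an FPGA bridge can help.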

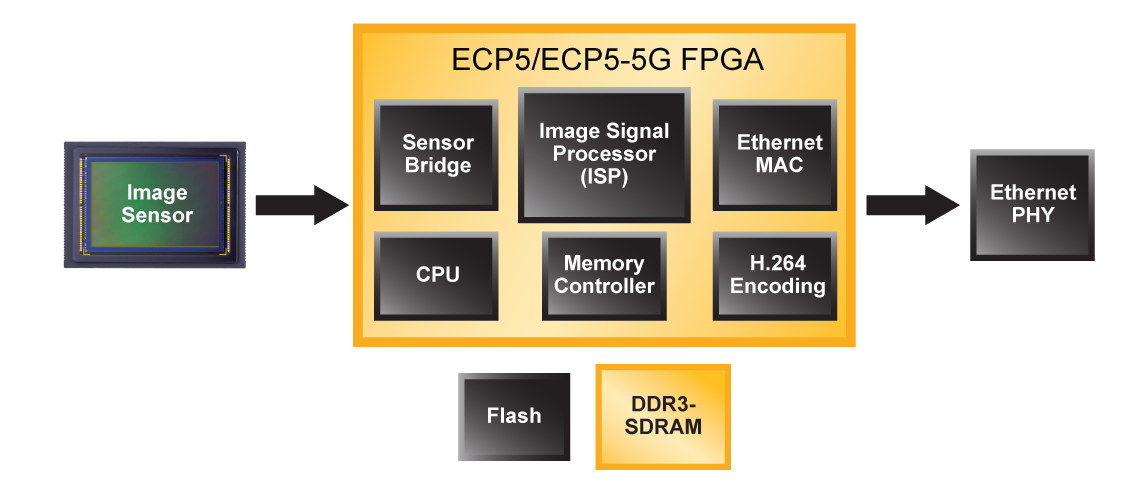

Beyond general industrial camera applications, machine vision, a more specialized camera application in the industrial space, can also benefit from FPGA connectivity and co-processing capabilities. The block diagram in Figure 2 illustrates the wide variety of roles FPGAs can play in a typical industrial machine vision system. On the camera side, FPGAs can serve as a sensor bridge, act as a complete camera ISP, or perform custom functions that help system designers achieve end-product differentiation. On the frame grabber board, FPGAs can handle video interface and image processing functions.

Intelligent Cameras for Traffic and Surveillance

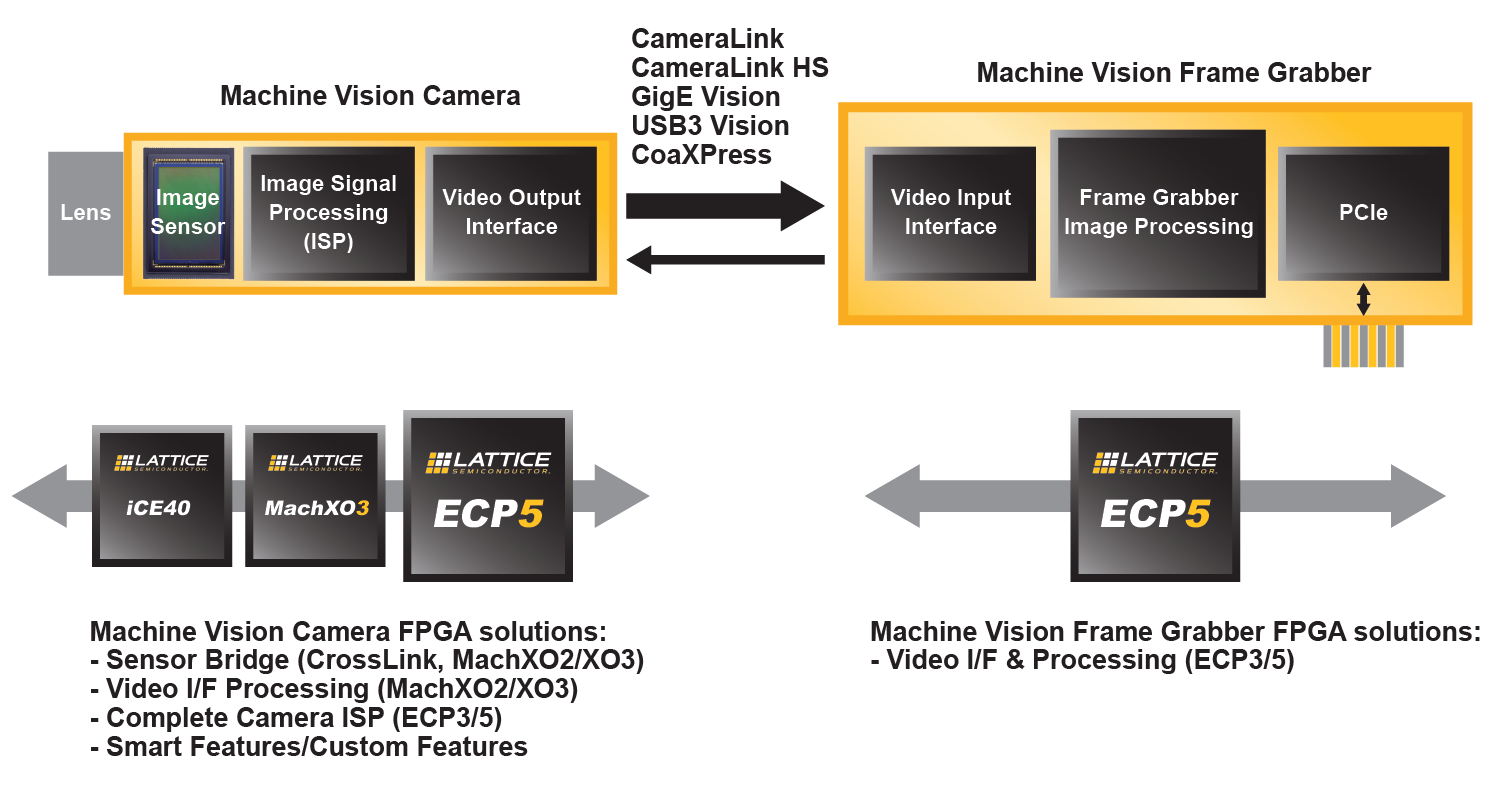

Intelligent traffic systems (ITS), including traffic flow monitoring, traffic violation identification, smart parking, and toll collection, are a key part of the vision of smart cities. Such systems typically require intelligent traffic cameras that can accurately detect many aspects of a vehicle, such as its license plate, even in harsh environments, and that perform video analytics at the edge rather than sending raw video streams back to the cloud. An AP alone often cannot meet the real-time processing requirements while staying within the system’s power budget. FPGAs, acting as co-processors to the AP, can enable the efficient real-time edge processing such systems require.

In addition to ISP functions, the FPGA can also perform video analytics to further off-load compute-intensive work from the AP, resulting in lower system power and higher real-time performance. An intelligent camera can use the FPGA for object detection, image processing, and image enhancement. The detected objects can be, for example, faces in a surveillance camera or license plates in a traffic camera.

In the intelligent traffic camera example shown in Figure 3, the FPGA detects the vehicle license plate in an image received from the sensor and performs image enhancement to generate clear images even in low-light or strong-backlight conditions. It does this by using different exposure settings for the object (the license plate) and for the background (the rest of the image), then fusing the object and background images into one clear picture. The FPGA’s object detection result feeds the analytics algorithm running on the AP. By off-loading the most compute-intensive steps of the analytics algorithm into the FPGA’s parallel-processing fabric, the intelligent camera increases performance while maintaining power efficiency.
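
The fusion step can be pictured with a deliberately simple Python sketch: given two frames captured with different exposure settings and a bounding box from the plate detector, take the plate region from the frame exposed for the plate and everything else from the frame exposed for the scene. The function name, box format, and pixel values are hypothetical; a production design would likely align the frames and blend across the box edge rather than switching hard at the boundary.

```python
def fuse_roi(background_frame, object_frame, box):
    """Combine two differently exposed frames using a detected bounding box.

    box is (x0, y0, x1, y1) with exclusive upper bounds. Pixels inside the box
    come from object_frame (exposed for the license plate); all others come
    from background_frame (exposed for the scene). No blending is applied.
    """
    x0, y0, x1, y1 = box
    fused = []
    for y, (bg_row, obj_row) in enumerate(zip(background_frame, object_frame)):
        fused.append([
            obj_row[x] if (y0 <= y < y1 and x0 <= x < x1) else bg_row[x]
            for x in range(len(bg_row))
        ])
    return fused


scene = [[40] * 6 for _ in range(4)]   # hypothetical background exposure
plate = [[180] * 6 for _ in range(4)]  # hypothetical plate exposure
for row in fuse_roi(scene, plate, box=(2, 1, 5, 3)):
    print(row)
```

Because each output pixel depends only on the two input pixels at the same position and on the box coordinates, this kind of operation can run at the full pixel rate in a streaming pipeline.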

Immersive AR and VR in Mobile Systems

As market demand for augmented reality/virtual reality (AR/VR) environments continues to grow, current head-mounted display (HMD)-based systems experience performance issues when running content on mobile APs. In particular, the vision-based positional tracking required for an immersive AR/VR experience has proven challenging for the processor. Here, the efficient parallel-processing architecture of FPGAs is well suited to positional tracking using a stereo camera and LED markers. The FPGA offers lower-latency, more power-efficient image processing than an AP. The programmable fabric and I/Os of the FPGA also let system designers choose and source the camera sensor from different vendors based on product requirements.

In “outside-in” positional tracking, a stereo camera is placed in the room (looking from the room environment in toward the user) to track user movements, such as body motions and hand movements, via LED markers mounted on the headset and hand controllers, as shown in Figure 4 below. The FPGA, located inside the camera unit on the tripod, uses the stereo camera data to calculate the user’s position, body motions, and hand movements, which are then passed wirelessly to the mobile AP (located in the HMD) to be incorporated into the AR/VR application. The stereo camera provides depth perception to the algorithms running on the FPGA, enabling positional tracking in all three dimensions.
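
How a stereo camera yields depth can be shown with the standard triangulation relation for a rectified stereo pair, Z = f · B / d, where f is the focal length in pixels, B the baseline between the two cameras, and d the disparity (the horizontal shift of the same marker between the left and right images). The Python sketch below applies it to one LED marker. It assumes an ideal, already-rectified pinhole camera pair; the function name and camera parameters are hypothetical and not taken from any specific tracking product.

```python
def triangulate(u_left, u_right, v, focal_px, baseline_m, cx, cy):
    """Locate one LED marker in 3D from a rectified stereo pair.

    Assumes an ideal pinhole model with both cameras sharing focal length
    focal_px (pixels) and principal point (cx, cy), separated horizontally by
    baseline_m (meters). Returns (x, y, z) in meters in the left camera frame.
    """
    disparity = u_left - u_right  # horizontal shift of the marker, in pixels
    if disparity <= 0:
        raise ValueError("marker must appear further right in the left image")
    z = focal_px * baseline_m / disparity  # depth: Z = f * B / d
    x = (u_left - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return x, y, z


# Hypothetical camera: 800 px focal length, 10 cm baseline, 640x480 image.
print(triangulate(u_left=400, u_right=360, v=240,
                  focal_px=800, baseline_m=0.10, cx=320, cy=240))
```

With a 40-pixel disparity, the marker in this example sits 2 m from the camera and 0.2 m to the right of its optical axis. Since depth is inversely proportional to disparity, precision drops with distance, which is one reason baseline and sensor resolution matter when choosing the camera.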

In “inside-out” positional tracking, a stereo camera mounted on the user’s headset (looking out from the user’s vantage point toward the room environment) tracks the user’s hand movements via LED markers mounted on the hand controllers, as shown in Figure 5. The FPGA, located inside the head-mounted camera unit, uses the stereo camera data to calculate the user’s hand movements, which are then passed to the mobile AP to be incorporated into the AR/VR application.
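
Before any triangulation, each camera image must be searched for the LED markers. Because the LEDs are much brighter than their surroundings, a fixed brightness threshold followed by grouping of connected bright pixels is a simple way to locate them, and the centroid of each group gives a sub-pixel marker position. The Python sketch below uses a flood fill for readability; it is an illustration under that assumption, and an FPGA implementation would more likely use a streaming, single-pass labeling scheme so that no frame buffer is needed. The function name, threshold, and test frame are hypothetical.

```python
def find_led_markers(frame, threshold):
    """Return (x, y) centroids of 4-connected blobs at or above threshold.

    Bright LED markers stand out against the background, so a fixed threshold
    followed by connected-component grouping is a simple way to find them.
    """
    height, width = len(frame), len(frame[0])
    seen = [[False] * width for _ in range(height)]
    centroids = []
    for y in range(height):
        for x in range(width):
            if seen[y][x] or frame[y][x] < threshold:
                continue
            # Flood-fill this blob, summing coordinates for its centroid.
            stack = [(x, y)]
            seen[y][x] = True
            sum_x = sum_y = count = 0
            while stack:
                px, py = stack.pop()
                sum_x, sum_y, count = sum_x + px, sum_y + py, count + 1
                for nx, ny in ((px + 1, py), (px - 1, py), (px, py + 1), (px, py - 1)):
                    inside = 0 <= nx < width and 0 <= ny < height
                    if inside and not seen[ny][nx] and frame[ny][nx] >= threshold:
                        seen[ny][nx] = True
                        stack.append((nx, ny))
            centroids.append((sum_x / count, sum_y / count))
    return centroids


frame = [[0] * 8 for _ in range(6)]
for (x, y) in [(1, 1), (2, 1), (1, 2), (2, 2), (6, 4)]:  # two hypothetical LEDs
    frame[y][x] = 255
print(find_led_markers(frame, threshold=200))  # prints [(1.5, 1.5), (6.0, 4.0)]
```

Matching each centroid in the left image to its counterpart in the right image then supplies the disparity used for depth.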

While both outside-in and inside-out systems provide an immersive experience, outside-in tracking can provide a higher level of immersion because it can also track body motions (such as walking, running, squatting, and jumping) using the LED markers on the headset, thereby transposing body motions in the real world into the virtual world.

In both systems, user movements must appear in the virtual world instantly, with extremely low latency, to convey a “real” user experience. The FPGA’s parallel-processing capability is key to achieving this low latency. Additionally, its low power and small-form-factor packages are key to making this a mobile, untethered experience.
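
One rough way to see why a streaming, parallel pipeline helps with latency is to compare the extra delay each approach adds on top of the sensor's own readout while it waits for input. A design that stores a whole frame before processing can wait up to a full frame period, whereas a pipeline that processes pixels as they arrive (for example, with a 3x3 window) waits only a few lines. The Python sketch below quantifies this under simplified, hypothetical assumptions: a tracking camera at 90 frames/s with 480 lines per frame, rows arriving at a uniform rate, and blanking and compute time ignored.

```python
def buffering_latency_ms(fps, lines_per_frame, lines_buffered):
    """Extra delay, in ms, spent waiting for input rows before output can start.

    Assumes rows arrive at a uniform rate over the frame period and ignores
    blanking intervals and compute time.
    """
    frame_period_ms = 1000.0 / fps
    return frame_period_ms * lines_buffered / lines_per_frame


# Hypothetical tracking camera: 90 frames/s, 480 lines per frame.
whole_frame = buffering_latency_ms(90, 480, lines_buffered=480)
three_lines = buffering_latency_ms(90, 480, lines_buffered=3)
print(f"frame-buffered: {whole_frame:.2f} ms, line-streamed: {three_lines:.3f} ms")
```

Under these assumptions the frame-buffered approach adds about 11 ms of waiting, while the line-streamed approach adds well under 0.1 ms. The real figures depend on the sensor timing and the algorithm, so this is an order-of-magnitude illustration only.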

Machine Learning at the Edge

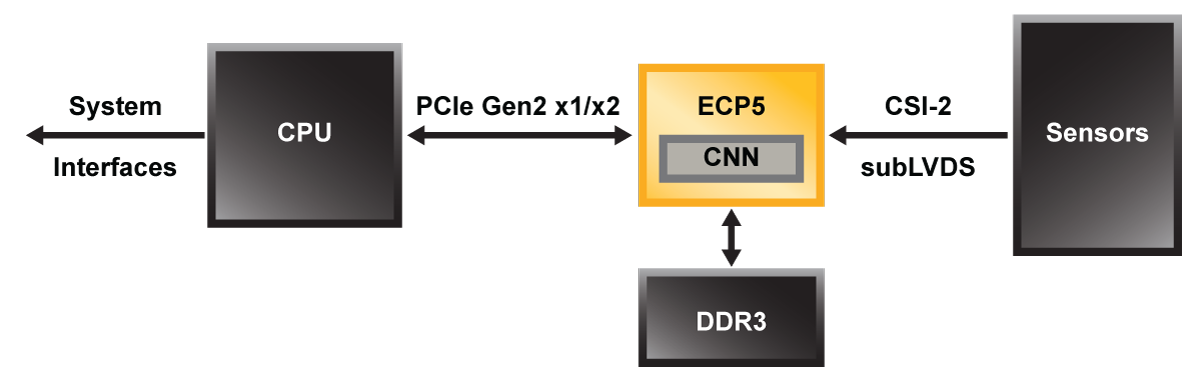

With extensive embedded DSP resources, the parallel-processing architecture inherent to FPGAs, and significant advantages in power, footprint, and cost, FPGAs meet many of the requirements of the emerging AI market. The DSP blocks, for example, can perform fixed-point arithmetic at lower power per MHz than GPUs performing floating-point arithmetic. These characteristics are attractive to developers of power-limited intelligent solutions at the edge. Figure 6 shows an example in which the FPGA acts as an inferencing accelerator, running a pre-trained Convolutional Neural Network (CNN) on data coming from camera sensors. The CNN engine running on the FPGA recognizes objects or faces and passes the results to the system CPU, achieving fast, low-power object and face recognition.
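
The fixed-point arithmetic mentioned above can be illustrated with a small Python sketch of symmetric 8-bit quantization, one common scheme for running a pre-trained network on integer hardware. Real-valued weights and activations are mapped to int8 using a per-tensor scale, the dot product is computed entirely in integers (the multiply-accumulate work DSP blocks perform), and the result is rescaled once at the end. The scales and values are hypothetical, chosen so the arithmetic is exact; they are not taken from any particular CNN engine.

```python
def quantize(values, scale):
    """Symmetric 8-bit quantization: q = round(v / scale), clipped to int8."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def int_dot(q_weights, q_activations):
    """Integer multiply-accumulate, the operation FPGA DSP blocks perform."""
    acc = 0  # a hardware accumulator would be wider than 8 bits, e.g. 32
    for w, a in zip(q_weights, q_activations):
        acc += w * a
    return acc


weights = [0.5, -0.25, 0.75]        # hypothetical pre-trained weights
activations = [1.0, 2.0, -1.0]      # hypothetical input activations
w_scale, a_scale = 1 / 128, 1 / 32  # hypothetical per-tensor scales

q_w = quantize(weights, w_scale)      # [64, -32, 96]
q_a = quantize(activations, a_scale)  # [32, 64, -32]
acc = int_dot(q_w, q_a)               # -3072
print(acc * w_scale * a_scale)        # dequantized result: -0.75
```

Here the integer result matches the floating-point dot product exactly; with arbitrary values, quantization introduces small rounding errors, which is the accuracy trade-off accepted in exchange for cheaper arithmetic.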

Implementing the design in an FPGA gives designers the flexibility to scale it up or down to meet the specific power and performance trade-offs required by the end system. The design can achieve even lower power and fit into an FPGA with fewer than 85K LUTs by trading off performance and other parameters, such as reducing the frame rate, the frame size of the input image, or the number of bits used to represent the neural network weights and activations.
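
A back-of-the-envelope model shows why those parameters matter. For one k x k convolution layer, the multiply-accumulate (MAC) count per frame is the output height times output width times input channels times output channels times k squared, and weight storage is the number of weights times their bit width. The Python sketch below evaluates both for a hypothetical layer. It counts only MACs and weight storage for a single layer, not LUT usage, so it indicates the direction of a trade-off rather than predicting whether a design fits a given device.

```python
def conv_layer_cost(out_h, out_w, c_in, c_out, k, fps, weight_bits):
    """Rough compute and weight-storage cost of one k x k convolution layer.

    Returns (multiply-accumulates per second, weight storage in bytes).
    Ignores biases, padding effects, and activation storage.
    """
    macs_per_frame = out_h * out_w * c_in * c_out * k * k
    weight_bytes = c_in * c_out * k * k * weight_bits / 8
    return macs_per_frame * fps, weight_bytes


# Hypothetical layer: 3x3 kernel, 16 input and 32 output channels.
baseline = conv_layer_cost(64, 64, 16, 32, 3, fps=30, weight_bits=16)
scaled = conv_layer_cost(32, 32, 16, 32, 3, fps=15, weight_bits=8)
print(baseline)  # (566231040, 9216.0)
print(scaled)    # (70778880, 4608.0)
```

In this example, halving both image dimensions and the frame rate cuts the MAC rate by a factor of 8, and halving weight precision halves weight storage, the kind of reduction that lets a design target a smaller, lower-power device.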

Moreover, the FPGA’s reprogrammable nature allows designers to keep pace with rapidly changing market requirements. As algorithms evolve, users can quickly update the FPGA’s hardware logic itself by loading a new configuration, a capability that fixed-function ASICs cannot offer and that GPUs, whose hardware architecture is fixed, cannot match.

Today’s designers are constantly looking for new ways to reduce cost, power, and footprint while adding more intelligent processing to their edge applications. At the same time, they must keep pace with the rapidly changing performance and interface requirements of a new generation of sensors and displays used in edge applications. FPGAs offer designers the best of both worlds. By delivering high processing capability, SERDES, and low power in a small package, FPGAs give designers the co-processing and connectivity resources they need for intelligent vision systems.