The race for the fully autonomous vehicle continues. While a few automakers have separated themselves from the pack with unique design choices (e.g., Tesla’s refusal to use lidar), many seem to have already agreed on which sensors make up the status quo: usually radar, CMOS cameras, and lidar.

However, this combination of sensors is not yet capable of delivering full autonomy. Despite many predictions that automakers will achieve Level-5 autonomy in cities within the next five to ten years, many still call the technology a utopian endeavor. In the fervor to deliver the first fully autonomous vehicle, OEMs are starting to evaluate far-infrared (FIR) technology as the key to unlocking Level-5 capabilities.

The flaws of radar, cameras, and lidar

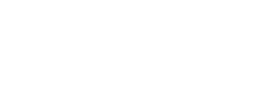

Radar, cameras, and lidar are the three sensors that make up most OEMs’ sensor suites. Such sensor redundancy is required because none of these sensors can independently provide sufficient coverage, detection, and classification (Figure 1). For example, radar can detect objects at long range, but it cannot accurately identify what they are. Cameras, on the other hand, provide a much clearer image of objects, but only at close range. For this reason, many OEMs outfit their autonomous vehicles with both radar and cameras, hoping that the two sensors working in conjunction will achieve the full coverage and detection needed: radar detects an object far down the road, and the camera provides a clearer image of it as it approaches.

Besides cameras and radar, autonomous vehicles chiefly rely upon lidar to find their way. Like radar, lidar works by sending out signals and using the reflection of those signals to measure the distance to an object (radar uses radio waves, while lidar uses laser light). Lidar provides a wider field of view than radar, making it seem an apt choice for automakers constructing their AVs’ sensor suites. However, despite efforts to reduce the cost, lidar remains, at present, very expensive for mass-market use. Some companies are championing more affordable lidar sensors, but these, unfortunately, have a lower resolution and thus cannot deliver the complete coverage, detection, and classification needed for full autonomy.
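The ranging principle that radar and lidar share can be made concrete with a little arithmetic. The sketch below assumes a simple pulsed time-of-flight model, in which the sensor times a pulse's round trip and halves it; this is an illustration only, since many automotive radars infer range from frequency shifts rather than by timing a single pulse. The function names are hypothetical.

```python
# Pulsed time-of-flight ranging: a simplified model, not any vendor's implementation.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # radio waves and laser light both travel at c


def range_from_round_trip(round_trip_seconds: float) -> float:
    """Return the distance in meters to a reflector, given the pulse's round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so the one-way distance is half the path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def round_trip_for_range(distance_m: float) -> float:
    """Return the round-trip time in seconds for a reflector at the given distance."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S
```

Under this model, an object 200 meters away returns its echo in roughly 1.33 microseconds, which gives a sense of the timing precision such sensors need.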

FIR is the hidden key

With so many clear weaknesses in radar, cameras, and lidar, it’s curious that so few OEMs have turned to the obvious option: FIR sensors.

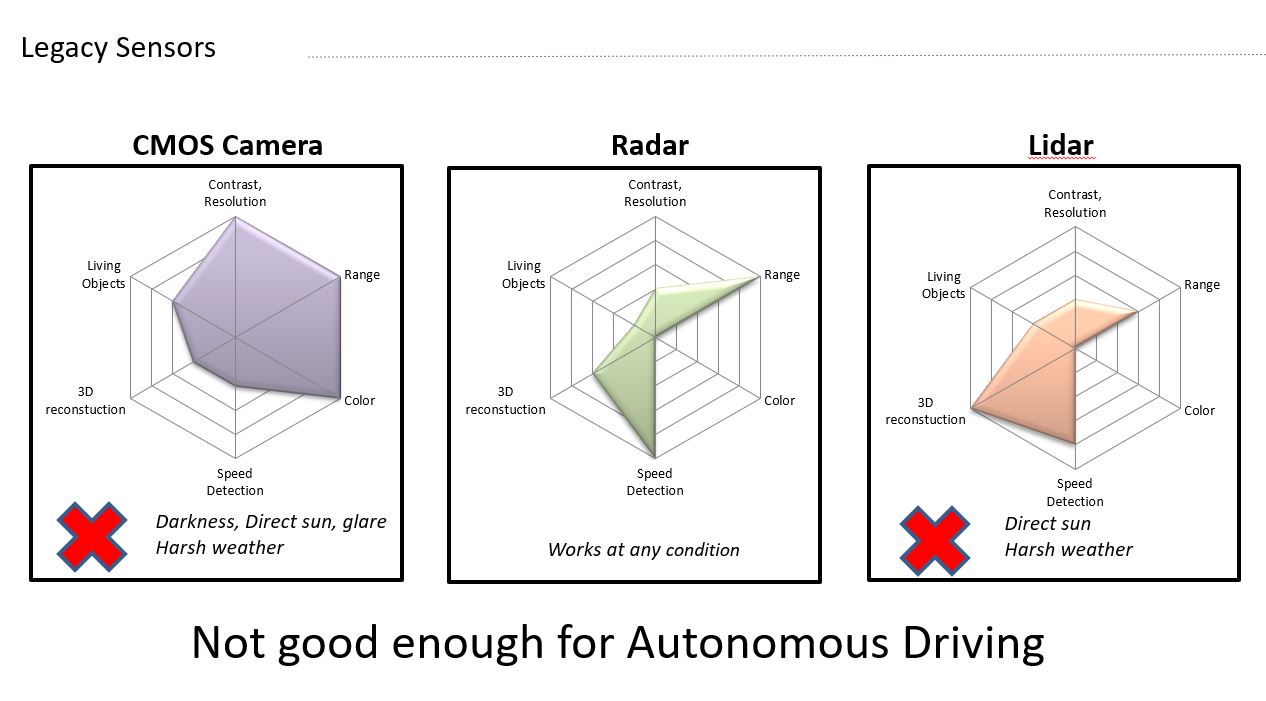

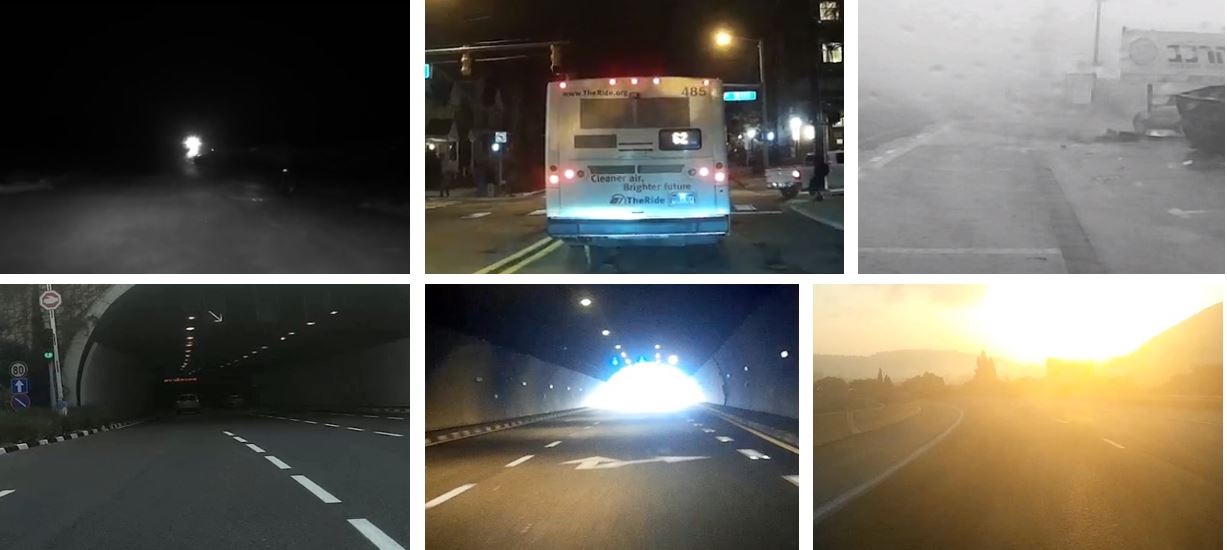

FIR has been used for decades in defense, security, firefighting, and construction, making it a mature and proven technology that can reliably detect and classify a vehicle’s surroundings. Unlike radar and cameras, which struggle to produce a clear, detailed image of surrounding objects at both near and far ranges (Figure 2), FIR accesses a different band of the electromagnetic spectrum, enabling it to deliver reliable, detailed imagery of objects at a range of 200 meters (Figure 3). And unlike lidar, whose high cost keeps it out of the mass market, FIR technology is presently available at a cost the mass market can bear.

To advance the industry to the next level of autonomy, automakers must seriously consider not replacing other sensing modalities with FIR, but adding FIR to their sensor suites. Doing so will benefit other features of autonomous vehicles’ systems and provide the complete solution needed for full autonomy.

Rich imagery improves perception

Any feature of an autonomous vehicle’s system that relies heavily on machine-learning perception will benefit from the imagery a FIR sensor provides. This is because FIR provides an additional layer of information, which is needed for the vehicle’s planning and control. The rich imagery FIR provides improves perception-based tasks such as on-road object detection, classification, intention recognition, tracking, distance estimation, and semantic segmentation. The fine detail gathered by FIR sensors also improves an autonomous vehicle’s localization and mapping abilities, enabling tasks like simultaneous localization and mapping (SLAM).

Even though FIR offers an additional layer of rich information for autonomous vehicles, its proponents are not suggesting that FIR replace all other sensors as the sole means of perception; like other sensors, FIR has a few limits. For example, because FIR functions by assessing an object’s thermal signature and emissivity (how effectively the object emits thermal radiation), FIR cannot see colors. Color perception, of course, is necessary for autonomous vehicles to recognize and respond to traffic lights and street signs. However, when fused with a CMOS solution in a sensor suite, FIR can deliver the complete sensing capabilities needed to achieve full autonomy.

Full solution when fused with other sensors

First, consider the immediate, drivable area of a vehicle. A CMOS solution can adequately detect the lanes on the road, but it cannot reliably account for the entire drivable area. The image a FIR sensor delivers, on the other hand, is invariant to lighting (it looks the same whether the vehicle is driving by day or by night) and can thus provide better area detection of a vehicle’s surroundings.

Because it reads an object’s thermal signature and emissivity, FIR can also accurately classify the detected objects in a vehicle’s surroundings. In fact, FIR is the only sensing solution that can distinguish living objects from non-living ones; this is critical for giving autonomous vehicles reliable pedestrian detection (Figure 4). Moreover, FIR sensors can accurately detect and classify pedestrians at long range, improving vehicles’ intention estimation. Although CMOS solutions often have a higher resolution than FIR for general object detection, their inability to immediately classify living objects renders them an insufficient solution to autonomous vehicles’ vision problems. By combining these two sensors into a fused solution, however, OEMs can achieve the best understanding of all objects (both living and non-living) in a vehicle’s surroundings.

By working with CMOS in a fused solution, FIR’s flaw in color recognition can be mitigated. In fact, an AV’s ability to react to signs is improved by FIR/CMOS fusion: FIR sensors can effectively detect thermally homogeneous regions and separate them from the background, something CMOS struggles to do. Using these two sensors in conjunction (with the FIR sensor clearly separating the traffic sign from the background and the CMOS solution reading the sign) allows autonomous vehicles to best see their surroundings.
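The division of labor described above, in which FIR locates the sign and CMOS reads it, can be sketched in a few lines. This is a toy illustration under strong assumptions, not any vendor's pipeline: the two frames are assumed to be pixel-aligned and equally sized, the background level is approximated by the frame median, and the names `thermal_region_box` and `crop` are hypothetical. Reading the sign itself is left to a downstream CMOS-based recognizer that is not shown.

```python
from statistics import median
from typing import List, Optional, Tuple

Frame = List[List[float]]        # 2D grid of pixel values, indexed [row][column]
Box = Tuple[int, int, int, int]  # (top, left, bottom, right), all inclusive


def thermal_region_box(fir: Frame, min_delta: float) -> Optional[Box]:
    """Bounding box of FIR pixels that differ from the background by at least min_delta.

    The background is taken as the median of the whole frame, a crude
    stand-in for real thermal segmentation.
    """
    background = median(value for row in fir for value in row)
    hits = [
        (r, c)
        for r, row in enumerate(fir)
        for c, value in enumerate(row)
        if abs(value - background) >= min_delta
    ]
    if not hits:
        return None  # nothing stands out thermally from the background
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    return min(rows), min(cols), max(rows), max(cols)


def crop(frame: Frame, box: Box) -> Frame:
    """Cut the same box out of a second frame (here, the aligned CMOS image)."""
    top, left, bottom, right = box
    return [row[left:right + 1] for row in frame[top:bottom + 1]]
```

In use, the box found in the FIR frame would be passed to `crop` along with the CMOS frame, so that the color image of just the sign region reaches the recognizer.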

Cost-effective

For the automotive industry, FIR technology has long remained out of reach for the mass market, as legacy companies have, until recently, reserved it exclusively for luxury brands.

Now, however, the technology is in the midst of a revolution led by new sensor companies like AdaSky, an Israeli startup that recently developed Viper, a high-resolution thermal camera that passively collects FIR signals, converts them to high-resolution VGA video, and applies deep-learning computer vision algorithms to sense and analyze its surroundings (Figure 5). Viper adapts mature FIR technology to the specific needs of the automotive market, at a price that is, in fact, more cost-effective than lidar.

FIR has long been the obvious (albeit hidden) key to achieving full vehicle autonomy. Now that a new generation of the technology offers improved functionality at reduced expense, automakers are starting to pay attention.